Cybersecurity Is About to Get Wild. Here's What Leaders Need to Know

The Calm Before the Storm

There is a particular feeling that experienced cybersecurity professionals get just before a bad year. A sense that several things are lining up at once, and that the ground is about to shift.

Our CEO and co-founder Brian Gallagher has that feeling now, and he is not the kind of person who panics easily. With 45 years of experience in IT and cybersecurity, he has lived through the Morris Worm, Code Red, NIMDA, SQL Slammer, Conficker, Heartbleed, WannaCry, NotPetya, and SolarWinds. Each of those felt, at the time, like the roof was coming off.

His latest piece on his personal blog, Pressure Tested, carries a blunt title: “Cybersecurity's 2026 Wild Ride”. His view is that what is coming will be worse than any of those previous events, not because any single incident will be the largest, but because we are about to face waves of them at the same time.

And then, on the far side, things will be more secure than they have ever been.

"We are about to enter the wildest period of cybersecurity we've ever seen. Yes, this sounds like hyperbole. It isn't. On the other side of it we will be more secure than ever."

Brian Gallagher, CEO, LEMA Logic

This article distils Brian's analysis for non-technical leaders. The full version, with the technical depth and references, lives on Pressure Tested.

What Has Actually Changed in 2026

For decades, finding a serious bug in widely-used software took elite human researchers months or years of patient work. That was the natural rate-limiter on cyber attacks. The economics of attacking most organisations simply did not work, because the cost of finding and exploiting a useful vulnerability was higher than the payoff.

That has changed. AI now makes it dramatically cheaper and faster to find serious bugs in widely-used software.

A new generation of AI models like Anthropic’s Mythos and OpenAI’s Cyber can now find decades-old vulnerabilities in widely-deployed software in hours instead of years. Mythos recently scored 100% on professional cybersecurity challenges on which the best AI had scored 0% (yes, zero percent) the year before. It found a 27-year-old bug in OpenBSD, a 16-year-old one in a video codec used in nearly every video pipeline on Earth, and a 17-year-old remote code execution vulnerability in FreeBSD. Over 99% of the thousands of high- and critical-severity vulnerabilities it surfaced remain unpatched.

The bugs aren't new. They have been sitting in our software the whole time. AI didn't just give us a flashlight to help find them. It gave us a floodlight, bright enough to see them all.

The problem is that the same floodlight is also available to attackers.

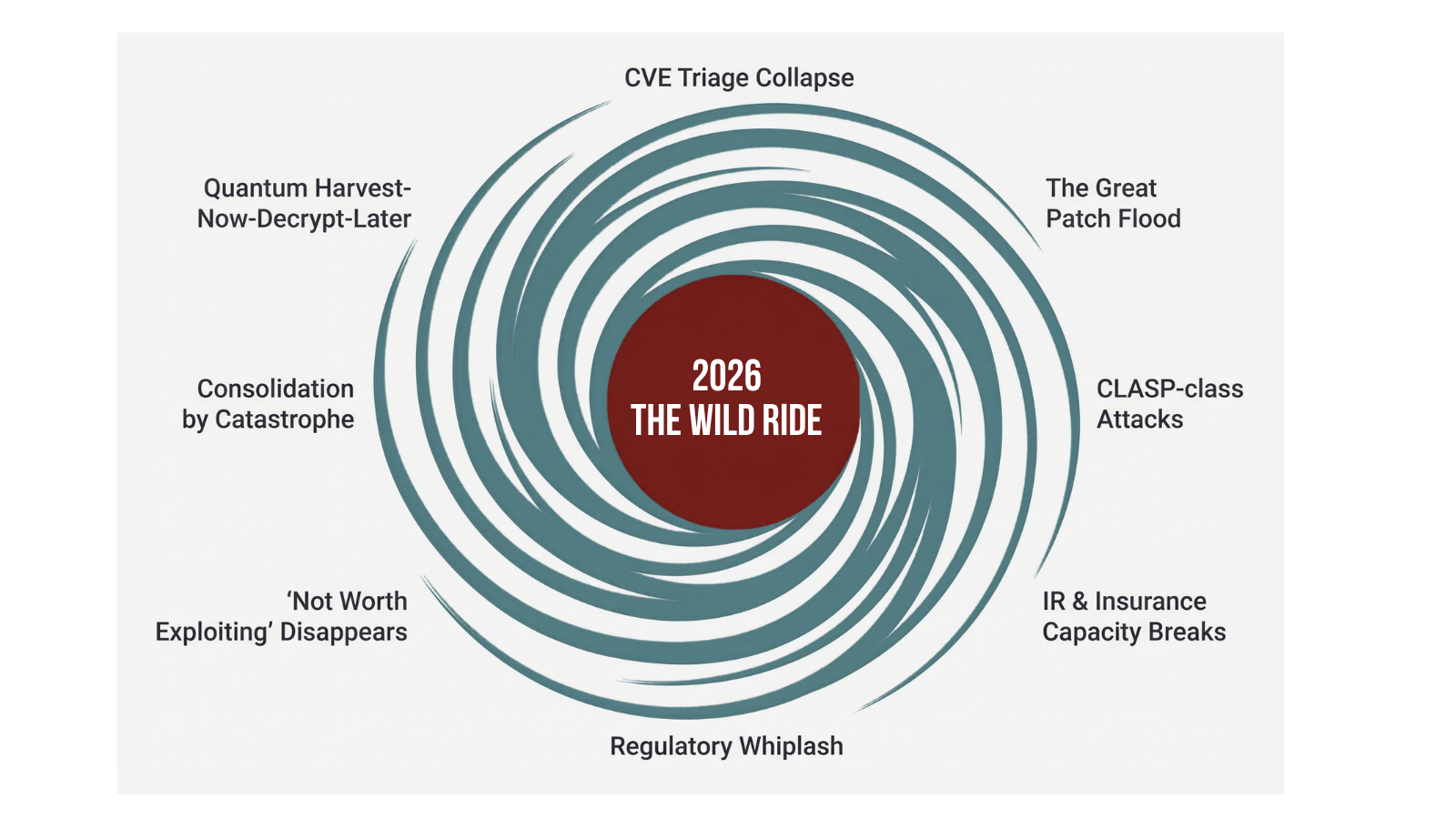

Eight Things Converging at Once

Brian's blog, Pressure Tested, sets out eight separate dangers that he predicts will all mature in 2026, with his references and supporting evidence linked throughout. Most leaders will not have considered most of these. Briefly:

1. The CVE triage system is about to be overwhelmed. This is the global early-warning system for software vulnerabilities, and it was already strained. Patch cycles are shrinking from 30 days to 14 to 7 to "deploy on disclosure", and defenders still cannot keep up.

2. A wave of AI-surfaced vulnerability disclosures is about to flood maintainers, regulators, and security teams. Many will be in software that cannot easily be patched, including routers, industrial control systems, and embedded firmware.

3. A novel attack pattern called CLASP weaponises the patching process itself. Brian disclosed this earlier this month to the Isle of Man Cyber Security Centre, the UK NCSC, CISA, and CERT/CC. Organisations with the most disciplined patching processes are the most exposed to it. The full disclosure is at clasp.info, and we have published a separate post about it.

4. Cyber insurance and incident response capacity will fail exactly when everyone needs them at once. Premiums are already rising. Coverage exclusions are already being written.

5. Regulatory whiplash is likely, as governments respond faster than organisations can comply.

6. The "not worth attacking" middle disappears. Small banks, regional utilities, supply chain SaaS vendors, local government systems, and other organisations that were previously below the economic threshold for attack become viable targets at scale.

7. A wave of consolidation is coming. Organisations that survive will do so by being prepared or being lucky. Some will not survive.

8. Quantum computing is on the horizon. Adversaries are already harvesting today's encrypted traffic to decrypt later, when they can. Anything with a long shelf-life, including signing keys, credentials, intellectual property, confidential personal data, and diplomatic and legal communications, needs to migrate to post-quantum encryption before it goes on the wire, not after.

"What's coming is a bunch of these, all at once, sustained across months."

Brian Gallagher, CEO, LEMA Logic

Faced with all of this, the temptation is to invest in more of what we already have. More walls. More patches. More monitoring. Brian's argument is that more of the same is exactly the wrong response.

The Mindset Shift

The single most important change is not technical. It is a shift in how leaders think about security.

For decades, the working assumption has been that security is mostly a defensive activity. Build the walls high enough, patch fast enough, train staff well enough, and most attacks bounce off. That model still has value, but new attacks like CLASP turn the patching process itself into the weapon that delivers the payload at speed and at scale.

The new posture Brian recommends is "assume compromise." Not as a worst-case plan, but as the baseline operating assumption. Build everything so that any given component or upstream dependency could be compromised at any moment without taking your business down.

This changes which investments matter. Marginal prevention spend has hit diminishing returns. Marginal recovery spend has not. The question shifts from "have we prevented X?" to "how fast can we recover from Y?"

That single reframe changes every downstream funding decision.

What This Means at Board Level

This mindset shift is not an IT problem. It is a board and enterprise continuity problem. If a board is not tracking cyber posture the way it tracks revenue and cash runway, the board is asleep at the wheel.

Brian's piece argues that the next board meeting at any serious organisation needs to cover, at minimum:

Whether offline, physically-disconnected backups exist, are rotated regularly, and have been tested by rebuilding production systems from bare metal in the past quarter

Which senior security, infrastructure, and platform engineers the organisation cannot afford to lose, and what it would cost to retain them

Whether the cyber insurance policy actually covers a simultaneous, AI-orchestrated attack across the organisation's full infrastructure, or whether exclusions will leave the organisation paying its own claim

Whether the recovery plan still works if the cloud provider, the incident response retainer, the managed detection service, and the AI tools the organisation depends on are all overwhelmed at the same time

Which critical data is held in third-party SaaS platforms, and whether the organisation can operate for a week without each of them

These are not solely technical questions. They are governance questions first. The technical teams can answer them, but only the board can decide whether to fund the answers.

All of this can sound bleak. It is not. One distinctive thing about cyber crises is that they end, and they tend to leave behind better infrastructure than existed before them.

Better on the Other Side

The Morris Worm gave us CERT/CC. Code Red and NIMDA gave us Microsoft's Trustworthy Computing initiative, which rewrote how a generation of software was built. Heartbleed funded serious audits across open-source libraries. SolarWinds pushed the industry toward Software Bills of Materials, signed builds, and zero trust architectures.

Each of these crises hurt at the time. Each of them produced a structural fix that became the new baseline.

The 2026 pressure is squarely on shared infrastructure: software libraries, build pipelines, certificate authorities, AI tooling, and the patching ecosystem itself. That is the kind of pressure that has historically produced broad, industry-wide improvements rather than narrow, one-off responses.

"While AI accelerates attack, it also accelerates defence. In the long run, defenders have the advantage. They have the source code, the telemetry, the deployment pipeline, and the same tools that the attackers are using."

Brian Gallagher, CEO, LEMA Logic

The good news is that this will not last forever. The bad news is that we are in it now, and most organisations have not noticed yet.

What To Do This Week

If you are a leader reading this, the most useful thing you can do right now is share it with the people who most need to see it: board members, CFOs, COOs, and senior leaders who are not in the security conversation today. They will be soon. Forward this article. Get the conversation started before the first wave lands.

Then, if you want to go deeper, Brian's full piece on Pressure Tested gives you the technical context. It is detailed, it is honest about uncertainty, and it gives you the language to take the right questions to your team.

If you would like to discuss what this means for your organisation specifically, book a discovery call with LEMA Logic. We work with leaders across the Isle of Man, the UK, and the US to translate cybersecurity and AI strategy into governance decisions that boards can actually act on.

Brace yourself. It is going to be a wild ride. But on the other side, we will be more secure than we have ever been.

Related reading:

The CLASP Attack Pattern, Weaponising Patching Best Practices (LEMA Logic press release)

Cybersecurity's 2026 Wild Ride (Pressure Tested, the full piece)

The CLASP Attack Pattern (Pressure Tested)

clasp.info (full security advisory)