Why Most AI Training Fails in Organisations

Organisations are investing in AI training, but most are seeing little return. The problem is not a lack of content: there is no shortage of courses, platforms, or self-paced learning libraries. The deeper issue is structural: most training targets individual AI skill without addressing organisational AI readiness.

Research reinforces this. Self-directed online courses typically have completion rates below 15%. Workers are, on average, 33% more productive in each hour they use generative AI, yet most corporate AI training is unstructured, undertaken outside working hours, and disconnected from implementation.

So how do you ensure your AI training is efficient and practical, and that it delivers real ROI? This article examines why AI training so often fails to deliver, what the research says about genuine productivity gains, and what structured, protected-time AI workforce development actually looks like in practice.

1. AI is Infrastructure, Not a Vertical

2. Why Most AI Training Fails

3. The Completion Rate Problem

4. Personal AI Capability vs. Organisational AI Readiness

5. The Two-Score Diagnostic Matrix

6. What the Research Says About AI Productivity Gains

7. What Structured AI Capability Development Looks Like

8. Moving Forward

9. Further Reading

1. AI is Infrastructure, Not a Vertical

There is a persistent tendency among business leaders to treat artificial intelligence as a department-level concern: something for IT to manage, or a topic on the innovation team's quarterly roadmap.

This framing is a mistake, and it often drives failed AI adoption strategies.

AI is infrastructure, closer in nature to electricity or the internet than to a new software product. When electricity was introduced to factories, the businesses that benefited most were not those that simply installed electric motors; they were the ones that redesigned their operations and retrained their workforce. The same principle applies to AI.

Stanford's 2025 AI Index Report documents that 78% of organisations now use AI in at least one business function, up from 55% the year before.

Adoption alone does not equal capability, however. Without structured workforce development, organisations are installing the wiring without training anyone to use it.

Key Principle

AI capability spans every function, from finance to marketing to operations, and workforce development must reflect that breadth.

2. Why Most AI Training Fails

Most corporate AI training underperforms, but not for lack of educational content. Platforms like Coursera, LinkedIn Learning, and Udemy offer thousands of AI courses. The failure is structural, and it occurs for three compounding reasons.

Training is treated as personal development, not operational investment.

Many organisations frame AI training as a benefit: something employees can pursue in their own time. This shifts the burden of upskilling onto individuals already managing full workloads. There is no protected training time, no structured pathway, and no connection to operational reality.

Individual skill is developed without organisational context.

An employee who completes a prompt engineering course returns to a workplace with no AI governance, no process mapping, and no leadership alignment. Their new capability has nowhere to go. The OECD's 2025 policy brief on the AI skills gap confirms this: the vast majority of workers exposed to AI require not specialist technical skills, but general AI literacy; yet most available training still skews toward advanced AI skills rather than broader workforce capability.

Training is disconnected from implementation.

Knowledge without application decays rapidly. Research suggests the half-life of professional skills has shortened to under five years, with AI accelerating that compression further. AI training that is not immediately connected to real workflows is unlikely to produce lasting change.

3. The Completion Rate Problem

The evidence on self-directed online learning completion rates is stark.

A comprehensive analysis of 221 MOOCs published in the International Review of Research in Open and Distributed Learning found a median completion rate of just 12.6%, with many courses falling below 5%. Subsequent research by Reich and Ruipérez-Valiente, analysing Harvard and MIT courses on the edX platform from 2012 to 2018, found no meaningful improvement over six years, with 52% of registrants never having engaged with course materials at all.

These figures should give pause to any organisation relying on self-paced online learning as its primary AI upskilling strategy. When training is optional, unstructured, and done in personal time, the vast majority of participants will not complete it.

This is not a motivation problem; it is a programme design problem.

Structured, employer-supported programmes with protected time, certification incentives, and cohort-based delivery achieve markedly higher completion rates. The University of Michigan, for example, found that participants pursuing certification spent 10% more time on course materials and were significantly more likely to stay engaged through to completion.

Format matters as much as content. If organisations are serious about building AI capability, they must invest in structured, multimodal, and time-protected learning, not merely access to self-paced content libraries.

Understanding why individual training fails is only part of the picture. Effective AI capability development spans two distinct dimensions.

4. Personal AI Capability vs. Organisational AI Readiness

Effective AI adoption requires proficiency across two distinct but interdependent dimensions. Most training programmes address only one. Understanding both matters for any AI adoption strategy or workforce development initiative.

What is Personal AI Capability?

Personal AI capability refers to an individual's proficiency in using AI tools effectively within their professional role. This includes the ability to construct effective prompts, select appropriate AI tools for specific tasks, critically evaluate AI-generated outputs for accuracy and fitness, and integrate AI into existing workflows to improve speed and quality. This is the competence an individual brings to the human–AI interaction.

What is Organisational AI Readiness?

Organisational AI readiness describes an institution's structural preparedness to adopt, govern, and scale AI across its operations. This includes leadership alignment on AI strategy, process mapping to identify high-value AI applications, governance and compliance frameworks, data hygiene and accessibility, and change management capacity. It is the environment into which individual AI capability is deployed.

These two dimensions are not additive; they are multiplicative. A highly AI-capable individual within an unprepared organisation will generate friction, not value. An organisation with excellent AI governance but an unskilled workforce will have policies and no one equipped to act on them.
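The difference between an additive and a multiplicative relationship can be made concrete with a minimal sketch. The 0 to 1 scoring scale, the function names, and the scenario values below are all assumptions chosen for illustration; they are not measured data or part of any published framework.

```python
def additive_value(capability: float, readiness: float) -> float:
    """Average the two scores: a weak dimension is offset by a strong one."""
    return (capability + readiness) / 2


def multiplicative_value(capability: float, readiness: float) -> float:
    """Multiply the two scores: a weak dimension drags the whole result down."""
    return capability * readiness


# Hypothetical scores on a 0-1 scale (illustrative only, not measured data).
scenarios = {
    "skilled individual, unprepared organisation": (0.9, 0.1),
    "unskilled workforce, strong governance": (0.1, 0.9),
    "moderate on both dimensions": (0.5, 0.5),
}

for label, (capability, readiness) in scenarios.items():
    add = additive_value(capability, readiness)
    mult = multiplicative_value(capability, readiness)
    print(f"{label}: additive={add:.2f}, multiplicative={mult:.2f}")
```

Under the additive view, all three scenarios look identical. Under the multiplicative view, the two lopsided scenarios fall well below the balanced one, which is the pattern the paragraph above describes.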

Personal AI capability comprises four practical competencies:

Prompting and interaction design: The ability to frame requests, provide context, and iterate with AI tools to produce useful outputs.

Tool selection and navigation: Knowing which AI tools are appropriate for specific tasks and understanding their strengths and limitations.

Output evaluation: Critical assessment of AI-generated content for accuracy, bias, tone, and appropriateness. The Harvard/BCG study describes this as operating within a 'jagged technological frontier', where AI capability is uneven across tasks.

Workflow integration: Embedding AI into daily professional tasks in ways that improve rather than disrupt existing processes.

Organisational AI readiness comprises five structural conditions:

Leadership alignment: Executive understanding of AI's strategic role and commitment to resource allocation for adoption.

Process mapping: Identification of which workflows, tasks, and decision points are most amenable to AI augmentation.

Governance and compliance: Clear policies on acceptable AI use, data handling, intellectual property, and risk management.

Data hygiene: Organisational data that is accessible, structured, and of sufficient quality to support AI applications.

Change management: Capacity to manage the cultural, procedural, and structural shifts that AI integration requires.

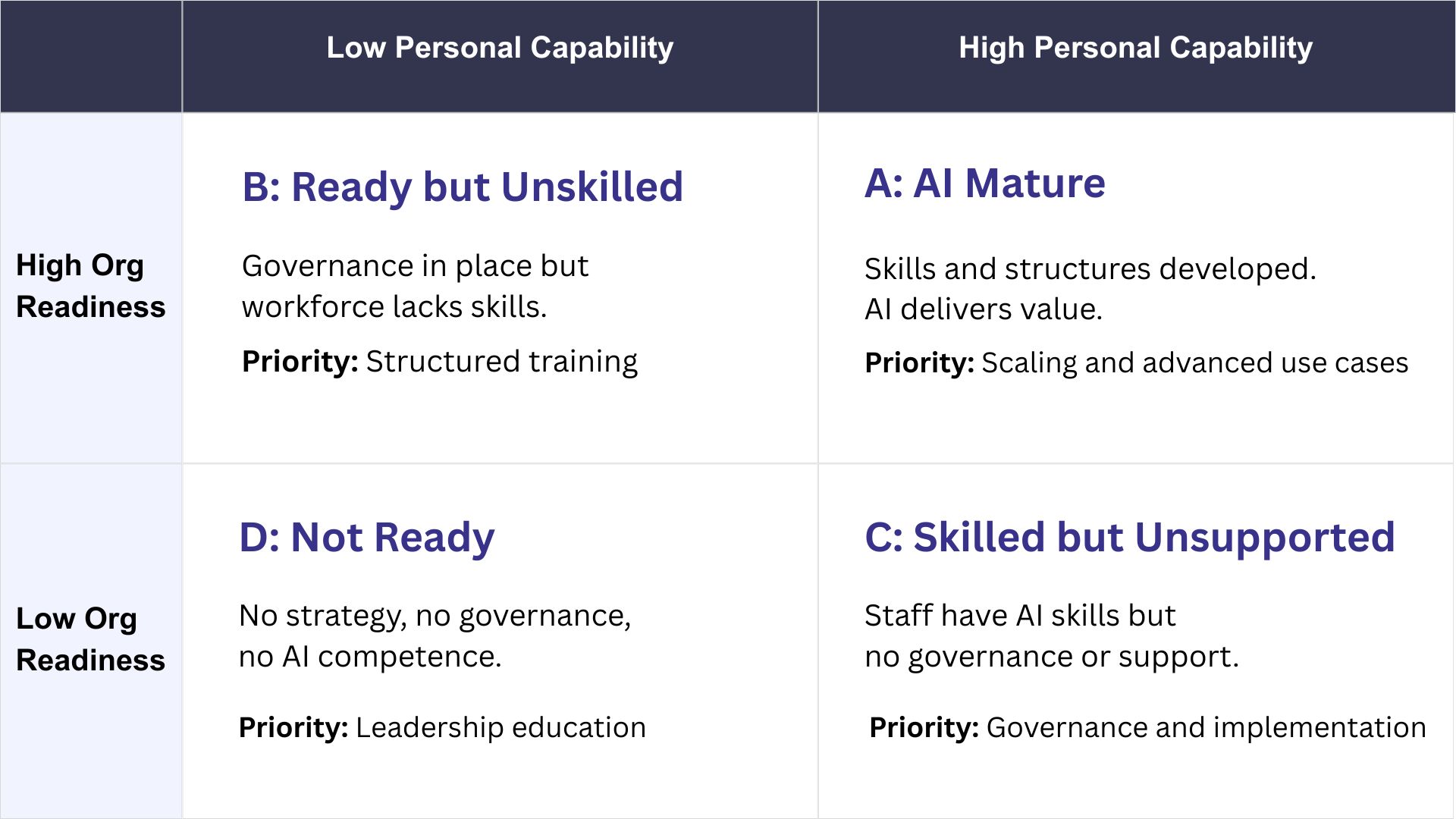

5. The Two-Score Diagnostic Matrix

The matrix below is a sample diagnostic tool for identifying where your organisation sits across both dimensions. Personal AI Capability (horizontal) mapped against Organisational AI Readiness (vertical) produces four quadrants, each with distinct characteristics and intervention requirements. A full assessment of these dimensions forms part of our GRIND Framework (our structured AI readiness methodology), through which we work with organisations to build a clear picture of their AI maturity and the steps required to move forward.

Most organisations beginning their AI journey sit in Quadrant D: no strategy, no governance, and no workforce AI capability. Moving from low to high AI maturity requires progress in both dimensions simultaneously. Investing in individual training without organisational readiness (moving right on the horizontal axis alone) produces Quadrant C: skilled individuals in an unprepared environment. Investing in governance and strategy without workforce capability (moving up on the vertical axis alone) produces Quadrant B: structures with no one to operate them.

The path to Quadrant A (AI-Mature) requires coordinated investment in both. Moving both axes simultaneously requires organisational diagnosis, stakeholder alignment, and programme design: activities that are consulting in nature, not content delivery. This is why AI capability development must be treated as a consulting engagement, not a training purchase.
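As a rough illustration of how the two scores map to quadrants, the sketch below classifies an organisation from a pair of scores. The 0 to 100 scale, the cut-off of 50, and the `Assessment` class are hypothetical choices made for this example; the article does not define a scoring scale, and a real assessment would rest on far more than two numbers.

```python
from dataclasses import dataclass

# Hypothetical cut-off: scores are assumed to run from 0 to 100, with 50 or
# above counted as "high". The article itself does not define a scale.
THRESHOLD = 50

# Keys are (high personal capability, high organisational readiness).
QUADRANTS = {
    (True, True): "A: AI-mature",
    (False, True): "B: structures with no one to operate them",
    (True, False): "C: skilled individuals in an unprepared environment",
    (False, False): "D: no strategy, no governance, no workforce AI capability",
}


@dataclass
class Assessment:
    personal_capability: float       # horizontal axis
    organisational_readiness: float  # vertical axis

    def quadrant(self) -> str:
        """Return the quadrant label for this pair of scores."""
        high_capability = self.personal_capability >= THRESHOLD
        high_readiness = self.organisational_readiness >= THRESHOLD
        return QUADRANTS[(high_capability, high_readiness)]


# Training-only investment: capability has risen, readiness has not.
print(Assessment(personal_capability=80, organisational_readiness=20).quadrant())
```

The example call represents an organisation that has invested in individual training alone, so it lands in Quadrant C rather than Quadrant A.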

6. What the Research Says About AI Productivity Gains

The commercial case for structured AI capability development is grounded in a growing body of peer-reviewed and institutional research. When AI is deployed effectively, the productivity gains are substantial.

The Key Findings

A widely cited study published in Science by Noy and Zhang (MIT, 2023) assigned professional writing tasks to 453 college-educated workers and randomly gave half of them access to ChatGPT. Those with access took 40% less time to complete their tasks and produced output rated 18% higher in quality. The effect was most pronounced among lower-performing workers, compressing the productivity distribution.

In a study published in the Quarterly Journal of Economics (2025), Brynjolfsson, Li, and Raymond tracked 5,172 customer support agents and found that access to a generative AI assistant increased productivity by 15% on average, with less experienced workers seeing improvements of up to 34%. The research also found evidence that AI facilitated learning, helping newer workers perform at the level of more experienced colleagues.

The Harvard Business School and Boston Consulting Group field experiment (Dell'Acqua et al., 2023) studied 758 consultants and found that for tasks within AI's capability frontier, those using GPT-4 completed 12.2% more tasks and worked 25% faster, with output rated over 40% higher in quality. The study introduced the concept of the 'jagged technological frontier': the finding that AI's capability is uneven across tasks in ways that are hard to predict, and that without proper training, workers can perform worse by misapplying AI to tasks outside its strengths.

The Aggregate Picture

Research from the Federal Reserve Bank of St. Louis, using nationally representative survey data from 2024, estimated that workers are on average 33% more productive in each hour that they use generative AI. To contextualise the scale: total US nonfarm labour productivity rose 2.3% across all of 2024 (BLS, 2025). A modelled 1.1% productivity gain attributable to generative AI use would be equivalent to roughly half of that figure (1.1 ÷ 2.3 ≈ 48%), from a single technology. Penn Wharton Budget Model's synthesis of published AI productivity studies found average labour cost savings ranging from 10% to 55%, with a mean of approximately 25%.

An OECD review of experimental research (2025) found that productivity gains across customer support, software development, and consulting ranged from 5% to over 25%, with less experienced workers generally benefiting more than their senior counterparts.

An Important Caveat

Every major study notes that these gains are contingent on effective deployment. The Harvard/BCG study found that for tasks outside AI's capability frontier, workers using AI were 19 percentage points less likely to produce correct solutions than those without it. Productivity gains from AI are not automatic. They depend on training, judgement, and organisational context.

The pattern across this research is consistent: AI delivers meaningful productivity gains, but those gains are neither guaranteed nor uniform. They accrue to workers who are properly trained, in organisations that have created the conditions for effective deployment. The productivity upside is real; so is the risk of getting it wrong. What separates the two is the quality of the programme behind the adoption.

7. What Structured AI Capability Development Looks Like

The commercial case is straightforward: a knowledge worker earning £45,000 who gains even a 15% productivity improvement through effective AI use represents £6,750 of additional value per year. For a team of 20, that is £135,000 annually, a multiple of what a structured programme costs. But capturing that value requires more than access to tools. It requires a well-designed programme built to deliver it.
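The arithmetic behind these figures can be reproduced in a few lines. This is a minimal sketch that assumes, as the worked example does, that salary is a reasonable proxy for the value of a worker's output; the function name and its parameters are illustrative, not part of any standard model.

```python
def annual_value_gain(salary: float, productivity_gain: float, headcount: int = 1) -> float:
    """Salary-proxied value of a productivity gain, per year.

    Assumes salary approximates the value of a worker's output, which is a
    simplification: it ignores overheads, ramp-up time, and uneven adoption.
    """
    return salary * productivity_gain * headcount


# Figures taken from the worked example: £45,000 salary, 15% gain, team of 20.
per_worker = annual_value_gain(45_000, 0.15)
team = annual_value_gain(45_000, 0.15, headcount=20)

print(f"Per worker: GBP {per_worker:,.0f}")
print(f"Team of 20: GBP {team:,.0f}")
```

Substituting your own salary bands, team sizes, and a conservative productivity assumption gives a quick first estimate to set against the cost of a programme.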

Effective AI workforce training is not a course. It is a structured programme that addresses both personal capability and organisational readiness, delivered within protected working time, using multimodal formats, and connected to measurable implementation outcomes.

Protected Training Time

The evidence on self-directed completion rates is unambiguous: if AI training is optional and relegated to personal time, it will not produce results at scale. Organisations serious about AI capability development must allocate dedicated working hours for training, not as a perk, but as an operational investment comparable to any other infrastructure deployment.

Multimodal Delivery

Effective AI training requires a blend of approaches:

live facilitated workshops for contextual understanding

hands-on practical sessions applying AI to actual workflows

asynchronous reference materials for ongoing support

cohort-based learning that builds internal knowledge networks.

Role-Specific Application

Generic AI training produces generic results. A finance professional, a marketing manager, and a customer service lead all need AI capability, but the tools, use cases, and workflows differ significantly. Structured programmes tailor content to functional context while maintaining consistent foundations.

Organisational Readiness Development

Training individuals is necessary but insufficient, yet most training programmes stop at the individual. Programmes must also address leadership alignment, governance, process mapping, and change management. Without leadership that understands AI's strategic role, processes mapped to where AI can add value, and governance frameworks that set clear boundaries, even a skilled workforce has nowhere to direct its capability. This is where the distinction between a training product and a consulting engagement becomes critical.

Implementation Pathways

Training must connect directly to implementation: identifying use cases during the programme, developing proof-of-concept applications, establishing success metrics, and building internal capacity for ongoing adoption. The goal is not knowledge acquisition; it is operational change.

The difference between organisations that achieve this and those that do not is rarely a question of intent; it is a question of programme design.

8. Moving Forward

Organisations that lead in the coming years will not be those with the most AI tools. They will be those that have built both the individual and organisational capability to use AI effectively, responsibly, and at scale.

If your organisation is moving from ad-hoc AI experimentation to structured capability development, the starting point is an honest assessment of where you sit on both dimensions: personal AI capability and organisational AI readiness.

LEMA Logic works with organisations to conduct structured AI readiness assessments, design tailored workforce capability programmes, and build the implementation pathways that turn training into measurable operational value. Our approach is consulting-led, research-informed, and focused on lasting adoption rather than one-off workshops.

This is the approach LEMA Logic has taken as a founding training partner on the Digital Isle of Man Activate AI programme. Across 30+ sessions delivered to 300+ Isle of Man professionals, our LearnAI curriculum has addressed both dimensions deliberately. Sessions like "Is Your Business Ready for AI?" develop organisational readiness, while "ChatGPT Unlocked" and "Using AI but Not Trusting the Results?" build personal capability. These sessions were free to attend and delivered in-person, in protected working time, reflecting exactly the model the research supports.

To discuss your organisation's AI capability needs, contact us at [email protected].

9. Further Reading

BCG (2023) ‘Reskilling for a Rapidly Changing World’. https://www.bcg.com/publications/2023/reskilling-workforce-for-future

Bick, A., Blandin, A. and Deming, D. (2025a) ‘The Impact of Generative AI on Work Productivity’, On the Economy. Federal Reserve Bank of St. Louis. https://www.stlouisfed.org/on-the-economy/2025/feb/impact-generative-ai-work-productivity

Bick, A., Blandin, A. and Deming, D. (2025b) ‘The State of Generative AI Adoption in 2025’, On the Economy. Federal Reserve Bank of St. Louis. https://www.stlouisfed.org/on-the-economy/2025/nov/state-generative-ai-adoption-2025

Brynjolfsson, E., Li, D. and Raymond, L.R. (2025) ‘Generative AI at Work’, The Quarterly Journal of Economics, 140(2), pp. 889–942. https://academic.oup.com/qje/article/140/2/889/7990658

Bureau of Labor Statistics (2025) Productivity and Costs, Fourth Quarter and Annual Averages 2024. U.S. Department of Labor. https://www.bls.gov/news.release/prod2.nr0.htm

Celik, B. and Cagiltay, K. (2024) ‘Uncovering MOOC Completion: a Comparative Study of Completion Rates From Different Perspectives’, Open Praxis, 16(3), pp. 445–456. https://openpraxis.org/articles/10.55982/openpraxis.16.3.606

Dell’Acqua, F., McFowland III, E., Mollick, E. et al. (2023) Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality. Harvard Business School Working Paper No. 24-013. https://www.hbs.edu/faculty/Pages/item.aspx?num=64700

Jordan, K. (2015) ‘Massive open online course completion rates revisited: Assessment, length and attrition’, International Review of Research in Open and Distributed Learning, 16(3), pp. 341–358. https://www.irrodl.org/index.php/irrodl/article/view/2112

Martins, P.S. (2021) ‘Employee training and firm performance: Evidence from ESF grant applications’, Labour Economics, 72, p. 102056. https://doi.org/10.1016/j.labeco.2021.102056

Noy, S. and Zhang, W. (2023) ‘Experimental evidence on the productivity effects of generative artificial intelligence’, Science, 381(6654), pp. 187–192. https://www.science.org/doi/10.1126/science.adh2586

OECD (2025a) Bridging the AI skills gap: Is training keeping up? OECD Artificial Intelligence Policy Brief. https://www.oecd.org/content/dam/oecd/en/publications/reports/2025/04/bridging-the-ai-skills-gap_b43c7c4a/66d0702e-en.pdf

OECD (2025b) ‘Unlocking productivity with generative AI: evidence from experimental studies’. https://www.oecd.org/en/blogs/2025/07/unlocking-productivity-with-generative-ai-evidence-from-experimental-studies.html

OECD (2026) ‘Making AI Work: Why Investing in Skills Matters’. https://www.oecd.org/en/blogs/2026/01/making-ai-work-why-investing-in-skills-matters.html

Penn Wharton Budget Model (2025) The Projected Impact of Generative AI on Future Productivity Growth. https://budgetmodel.wharton.upenn.edu/issues/2025/9/8/projected-impact-of-generative-ai-on-future-productivity-growth

Reich, J. and Ruipérez-Valiente, J.A. (2019) ‘The MOOC pivot’, Science, 363(6423), pp. 130–131. https://www.science.org/doi/10.1126/science.aav7958

Stanford Institute for Human-Centered Artificial Intelligence (2025) The 2025 AI Index Report. https://hai.stanford.edu/ai-index/2025-ai-index-report

University of Michigan Record (2023) ‘Research shows certificates boost MOOC completion rates’. https://record.umich.edu/articles/research-shows-certificates-boost-mooc-completion-rates/